Virtual Memory

Dependable memory hierarchy

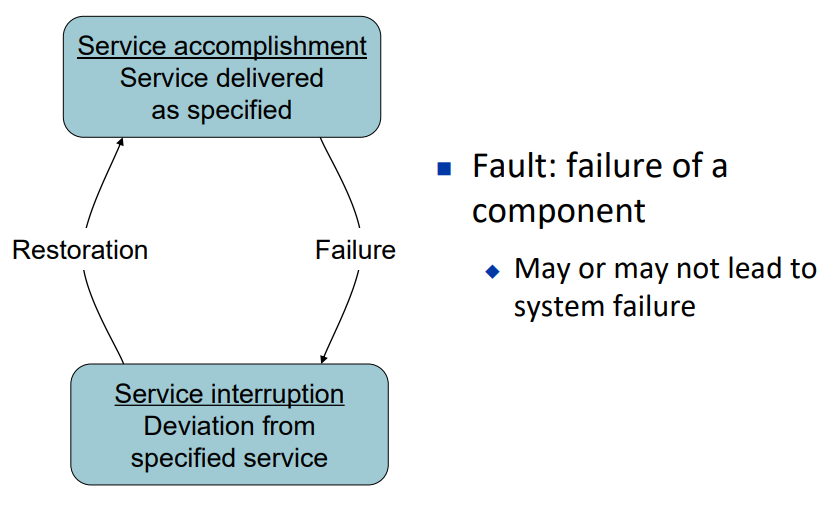

Dependability

Dependability Measures:

- Reliability: mean time to failure (MTTF)

- Service interruption: mean time to repair (MTTR)

- Mean time between failures

- MTBF = MTTF + MTTR

- Availability = MTTF / (MTTF + MTTR)

- Improving Availability

- Increase MTTF: fault avoidance, fault tolerance, fault forecasting

- Reduce MTTR: fault detection, fault diagnosis and fault repair

The Hamming SEC Code

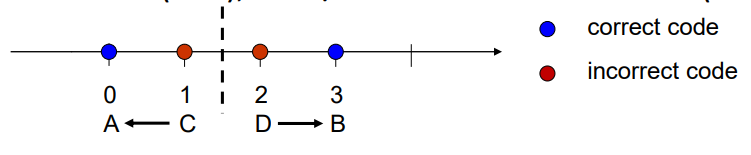

Hamming distance

- Number of bits that are different between two bit patterns

- E.g. use 111 to represent 1, use 000 to represent 0, hamming distance (d) is 3, d=3.

Minimum distance = 2 provides single bit error detection (SED)

- E.g. odd-parity code: 10 → 101, 11 → 110, d = 2

Minimum distance = 3

- single error correction(SEC)

- 2 bit/ double error detection (DED)

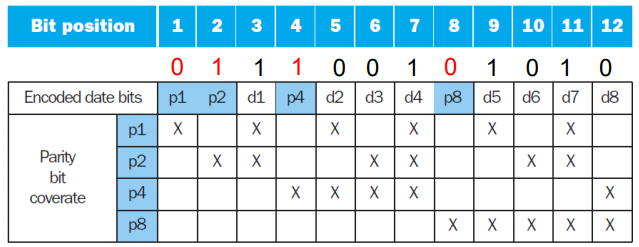

Encoding SEC

To calculate Hamming code:

Number bits from 1 on the left

All bit positions that are a power of 2 are parity bits (bit 1 2 4 8 are parity bits)

Each parity bit checks certain data bits:

!> 注意查验规律

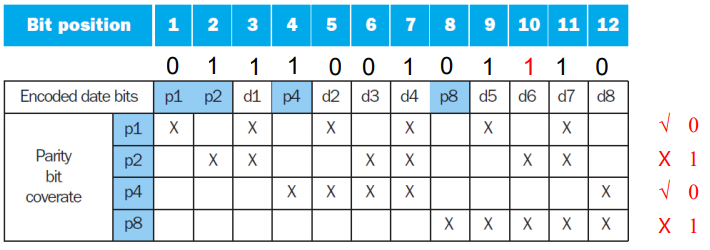

Decoding SEC

Value of parity bits indicates which bits are in error

- Use numbering from encoding procedure

- E.g.

- Parity bits = 0000 indicates no error

- Parity bits = 0101 indicates bit 10 was flipped

这里 10 = 2 + 8 (错误的 parity bit 下标)

parity bit 的值由其核验的位决定,1的个数必须为偶数,若为奇数则错误,记为1

parity bit 核验位数记忆规律:

- $p_1$: 核验二进制数中第一位为1的位数: 001, 011, 111, ...

- $p_2$: 核验二进制数中第二位为1的位数: 0010, 0011, 0110, 0111, ...

- ...

SEC/DED Code

- Add an additional parity bit for the whole word (pn)

- Make Hamming distance = 4

- Decoding:

- Let H = SEC parity bits

- H even, pn even, no error

- H odd, pn odd, correctable single bit error

- H even, pn odd, error in pn bit

- H odd, pn even, double error occurred

Note: ECC DRAM uses SEC/DED with 8 bits protecting each 64 bits

Virtual Memory

Use main memory as a “cache” for secondary (disk) storage

- Managed jointly by CPU hardware and the operating system (OS)

Programs share main memory

- Each gets a private virtual address space holding its frequently used code and data

- Protected from other programs

CPU and OS translate virtual addresses to physical addresses

- VM “block” is called a page

- VM “miss” is called a page fault

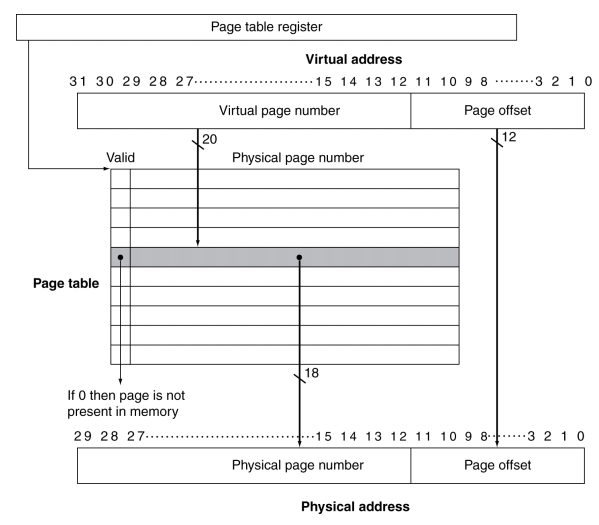

Address Translation

Fixed-size pages (e.g., 4K)

Page Fault Penalty

- On page fault, the page must be fetched from disk

- Takes millions of clock cycles

- Handled by OS code

- Try to minimize page fault rate

- Fully associative placement

- Smart replacement algorithms

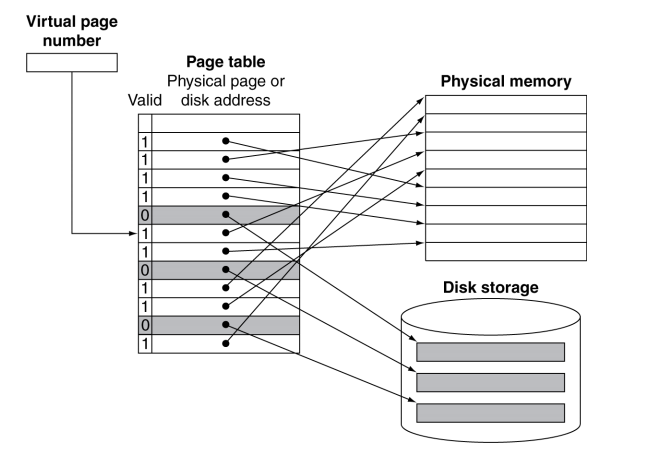

Page Tables

Where is the placement information? Page Table

- Array of page table entries (PTE), indexed by virtual page number

- Page table register in CPU points to page table in physical memory

Each program has its page table. Page table is in memory

If page is present in memory

- PTE stores the physical page number

- Plus other status bits (referenced, dirty, …)

If page is not present

- PTE can refer to location in swap space on disk

Mapping Pages to Storage

Replacement and Writes

- To reduce page fault rate, prefer least-recently used (LRU) replacement

- Reference bit (aka use bit) in PTE set to 1 on access to page

- Periodically cleared to 0 by OS

- A page with reference bit = 0 has not been used recently

- Disk writes take millions of cycles

- Block at once, not individual locations

- Use write-back, because write through is impractical

- Dirty bit in PTE set when page is written

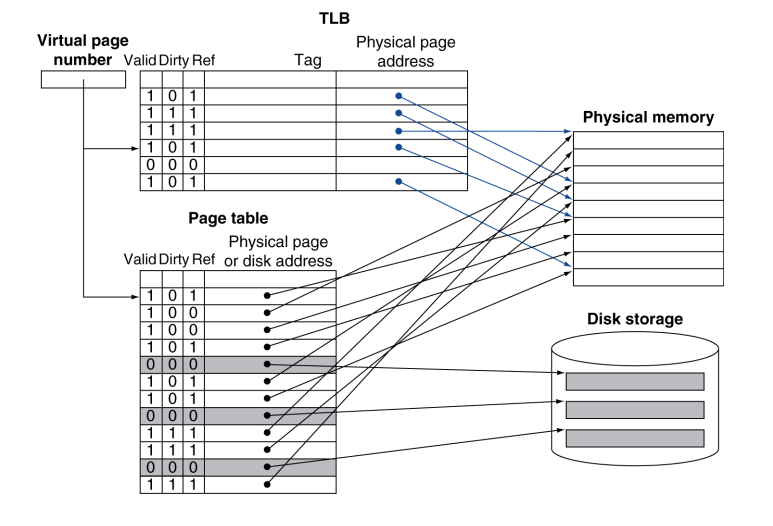

TLB

Fast Translation Using a TLB

- Since page table is in memory, every memory access by a program requires two memory accesses

- One to access the page table entry

- Then the actual memory access

- Can we move the page table to CPU?

- Yes, use a fast cache in CPU to store recently used PTEs, because access to page tables has good locality

- Called a Translation Look-aside Buffer (TLB) [fully associative]

- Typical: 16–512 PTEs, 0.5–1 cycle for hit, 10–100 cycles for miss, 0.01%–1% miss rate

- Misses could be handled by hardware or software

TLB Misses

- If page is in memory

- Load the PTE from memory and retry

- Could be handled in hardware

- Can get complex for more complicated page table structures

- Or in software

- Raise a special exception, with optimized handler

- If page is not in memory (page fault)

- OS handles fetching the page and updating the page table

- Then restart the faulting instruction

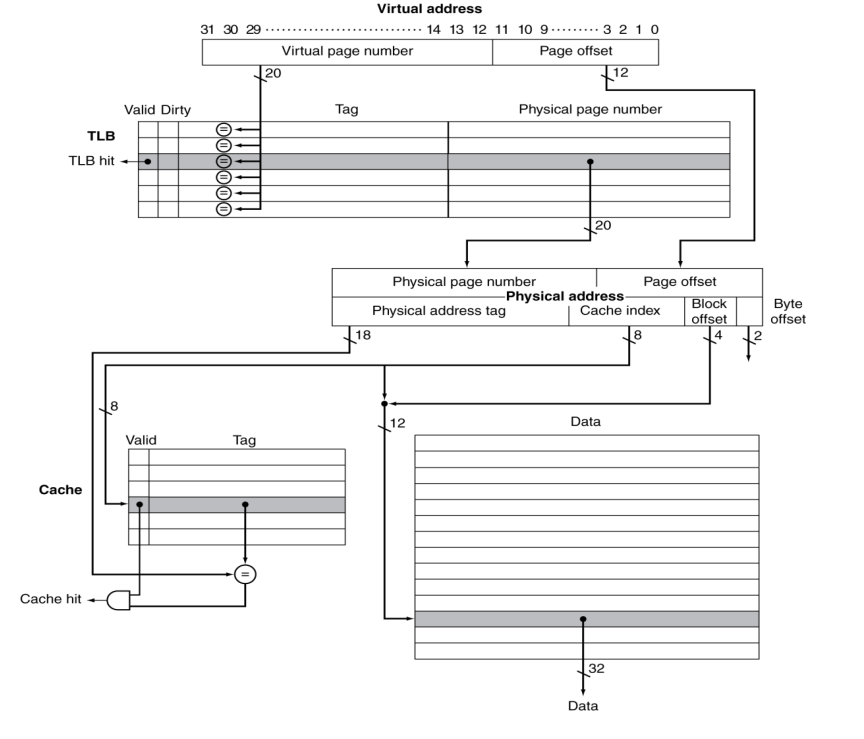

TLB and Cache Interaction

Memory Protection

- Different tasks can share parts of their virtual address spaces

- But need to protect against errant access

- Requires OS assistance

- Hardware support for OS protection

- Privileged supervisor mode (aka kernel mode)

- Privileged instructions

- Page tables and other state information only accessible in supervisor mode

- System call exception (e.g.,

syscallin MIPS)

Summery

The Memory Hierarchy

- Common principles apply at all levels of the memory hierarchy

- Based on notions of caching

- At each level in the hierarchy

- Block placement

- Finding a block

- Replacement on a miss

- Write policy

Block Placement

- Determined by associativity

- Direct mapped (1-way associative)

- One choice for placement

- n-way set associative

- n choices within a set

- Fully associative

- Any location

- Higher associativity reduces miss rate

- Increases complexity, cost, and access time

Finding a Block

AssociativityLocation methodTag comparisonsDirect mappedIndex1n-way set associativeSet index, then searchentries within the setnFully associativeSearch all entries#entriesFull lookup table0

- Virtual memory

- Full table lookup makes full associativity feasible

- Benefit in reduced miss rate

- Cache and TLB

- Set-associative, some cache uses direct map

Replacement

- Choice of entry to replace on a miss

- Least recently used (LRU)

- Complex and costly hardware for high associativity

- Random

- Close to LRU, easier to implement

- Virtual memory

- LRU approximation with hardware support

- Cache

- Both LRU and random is ok

Write Policy

- Write-through

- Update both upper and lower levels

- Simplifies replacement, but may require write buffer

- Write-back

- Update upper level only

- Update lower level when block is replaced

- Need to keep more state

- Virtual memory

- Only write-back is feasible, given disk write latency

Sources of Misses

- Compulsory misses (aka cold start misses)

- First access to a block

- Capacity misses

- Due to finite cache size

- A replaced block is later accessed again

- Conflict misses (aka collision misses)

- In a non-fully associative cache

- Due to competition for entries in a set

- Would not occur in a fully associative cache of the same total size

Cache Design Trade-offs

Design changeEffect on miss rateNegative performance effectIncrease cache sizeDecrease capacity missesMay increase access timeIncrease associativityDecrease conflict missesMay increase access timeIncrease block sizeDecrease compulsory missesIncreases miss penalty.For very large block size,may increase miss rate due to pollution.

Virtual Machines

- Host computer emulates guest operating system andmachine resources

- Improved isolation of multiple guests

- Avoids security and reliability problems

- Aids sharing of resources

- Virtualization has some performance impact

- Feasible with modern high-performance computers

Virtual Machine Monitor

- Maps virtual resources to physical resources

- Memory, I/O devices, CPUs

- Guest code runs on native machine in user mode

- Traps to VMM on privileged instructions and access to protected resources

- Guest OS may be different from host OS

- VMM handles real I/O devices

- Emulates generic virtual I/O devices for guest

Example: Timer Virtualization

- In native machine, on timer interrupt

- OS suspends current process, handles interrupt, selects and resumes next process

- With Virtual Machine Monitor

- VMM suspends current VM, handles interrupt, selects and resumes next VM

- If a VM requires timer interrupts

- VMM emulates a virtual timer

- Emulates interrupt for VM when physical timer interrupt occurs

Instruction Set Support

- Instruction Set Support

- Privileged instructions only available in system mode

- Trap to system if executed in user mode

- All physical resources only accessible using privileged instructions

- Including page tables, interrupt controls, I/O registers

- Renaissance of virtualization support

- Current ISAs (e.g., x86) adapting